Data sets being used in medicine today, with massive gaps and biases, will bake this bullshit into the code of AI within the biomedical industry; is there a doctor in the cloud?

In 2017, there was a case before the Texas Supreme Court, wherein one of the lawyers was trying to cite Wikipedia like it was a dictionary, relying on its definition of a term to validate an argument. To quote the Technology & Marketing Law Blog of Eric Goldman:

“The Texas Supreme Court…opined on the credibility and validity of Wikipedia as a dictionary. TL;DR = the Supreme Court says don’t treat Wikipedia like a dictionary. Any court reliance on Wikipedia may understandably raise concerns because of “the impermanence of Wikipedia content, which can be edited by anyone at any time, and the dubious quality of the information found on Wikipedia.” Peoples, supra at 3. Cass Sunstein, legal scholar and professor at Harvard Law School, also warns that judges’ use of Wikipedia “might introduce opportunistic editing.”

So, simple enough: Wikipedia is a living document, shouldn’t be used as a dictionary, and could be edited by opportunistic/interested/vested parties on a whim, so it’s suss.

But this got me thinking about algorithms and AI, and how they’re indiscriminately snarfing up the entire internet (which isn’t a true sample of all of humanity). Then I thought about the imbalanced, biased inputs that exist in our big data today, on sites like Wikipedia, and how those might turn into a big-time headache when people start relying on AI for credentialed information.

Right below the passage above was this:

Wait; “…overwhelmingly male, under 40, and living outside the US?”

So that spun me off into wondering about population biases in big data, which are fed into black-box AI, which led me to an article from npj Digital Medicine entitled “Sex and gender differences and biases in artificial intelligence for biomedicine and healthcare”:

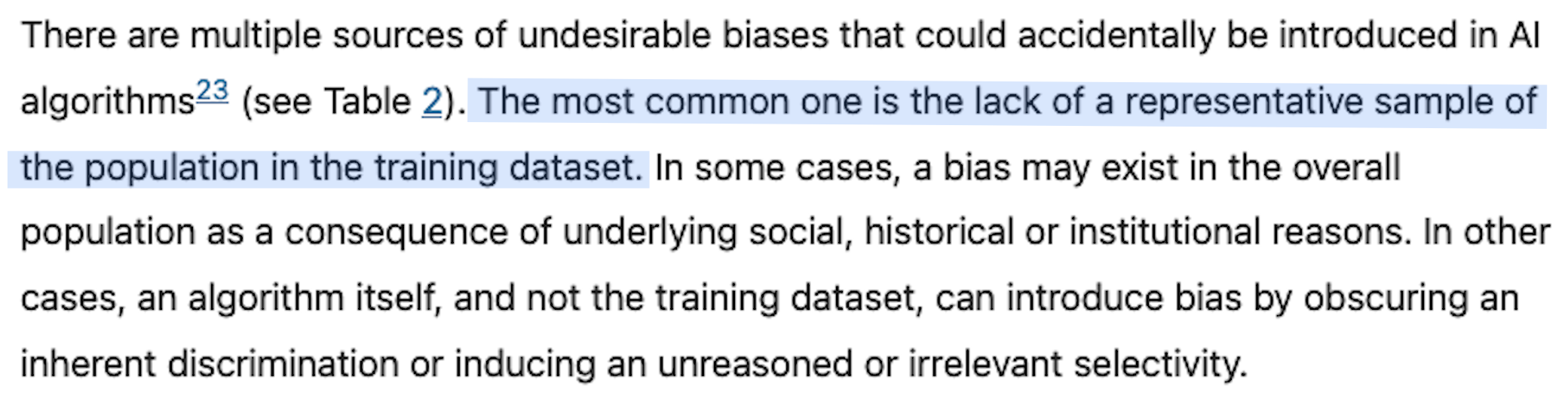

So, simple enough: if you don’t have data sets from a balanced, full-spectrum, representative sample of the population, then the scaled analysis and insights derived from AI won’t be applicable across that population.
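
To make that concrete, here is a minimal sketch in Python of checking a data set’s makeup against a reference population. Everything in it is an assumption for illustration: the `representation_gaps` helper, the toy cohort, and the 50/50 reference split are all made up, not taken from the npj Digital Medicine article.

```python
from collections import Counter


def representation_gaps(records, key, reference_shares):
    """Return (share in the data set) minus (share in the reference) per group.

    A positive value means the group is over-represented in the data set,
    a negative value means it is under-represented.
    """
    counts = Counter(record[key] for record in records)
    total = sum(counts.values())
    return {
        group: counts.get(group, 0) / total - expected
        for group, expected in reference_shares.items()
    }


# Hypothetical toy cohort: 8 male records and 2 female records.
cohort = [{"sex": "male"}] * 8 + [{"sex": "female"}] * 2

# Assumed reference split, for illustration only.
reference = {"male": 0.5, "female": 0.5}

gaps = representation_gaps(cohort, "sex", reference)
for group, gap in gaps.items():
    print(f"{group}: {gap:+.0%} vs. reference")
```

A check like this only catches headcount imbalance on an attribute somebody bothered to record. It says nothing about the context that was never collected in the first place, which is the bigger problem.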

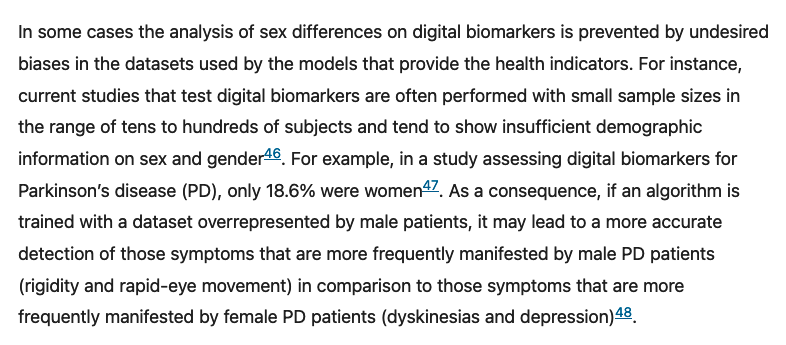

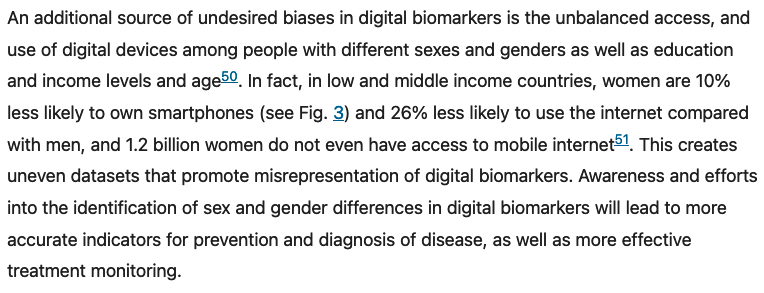

And then this section about digital biomarkers (which are physiological, psychological and behavioral indicators based on data including human-computer interaction (e.g. swipes, taps, and typing), physical activity (e.g. gait, dexterity) and voice variations, collected by portable, wearable, implantable or even ingestible devices) blew my wig back.

And then this kicker….

Reading the full article, which is filled with illustrations and examples of the qualitative gaps in the data layers of medicine and healthcare, there is but one conclusion:

There is no data in use, or even in existence, in the entire biomedical and healthcare universe of TODAY, right now, that informs how these industries’ various products, services, pharmacological solutions, etc., apply to individuals of every shape, sex, size and substance.

And if we don’t have the data sets and contextual inputs from every human on the planet, or the ability to appreciate, identify, and remove the biases within them (not only for every size, shape, and sex, but also mood, moment, DNA, background, physical environment, habits, schedules, etc.), how would we ever engineer a medically specialized AI that could generate healthcare protocols applicable to all those people, in all those states, across all those varying factors?

Ok, so, simple enough: there is a massive gender/people/context gap in the entire corpus of data afforded to the healthcare ecosystem, which is already generating unreasoned and irrelevant selectivity in its conclusions, prescriptions, and innovations. The same goes for any AI built off this data, or lack thereof.

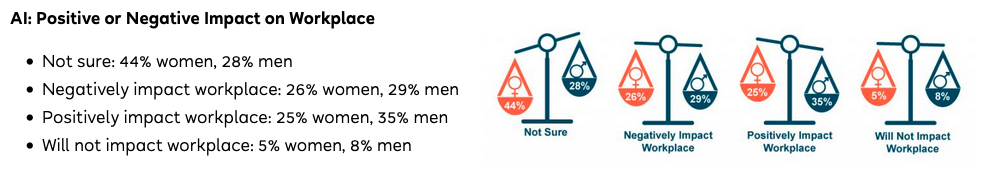

And then I stumbled across an interesting article from FlexJobs, titled “The AI Gender Gap: Exploring Variances in Workplace Adoption,” which felt like a nice way to tie it all up:

FlexJobs polled over 5,600 working professionals about AI adoption. When asked whether they think AI adoption will have a positive or negative impact on the workplace, men reacted more favorably, but the women overwhelmingly answered “not sure.”

Given the imbalanced, biased, and misused state of big data, I also wouldn’t be sure of AI’s impact on anything other than turning the existing gaps in our current data layers into chasms. And maybe, just maybe, we should seek out and listen to the many voices missing from the data sets of today.