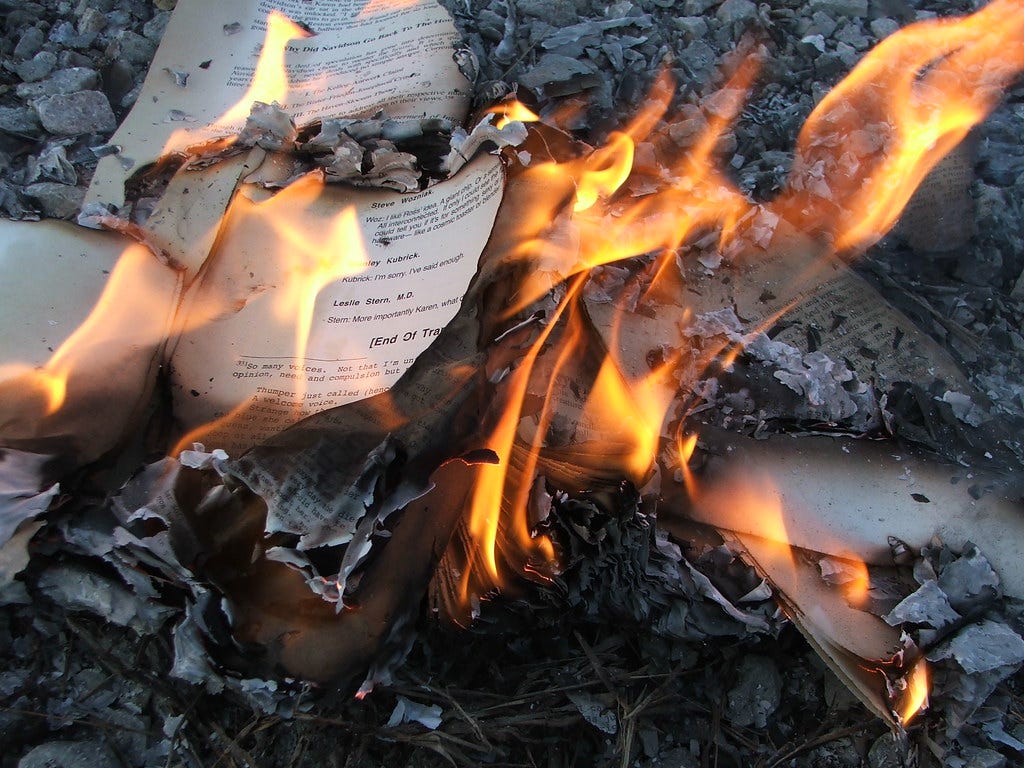

“Those who don’t build, must burn.” – Ray Bradbury, Fahrenheit 451

I woke up wondering what the people using AI for creative substitution are REALLY doing. Besides cutting costs and increasing productivity, why would we seek out something that requires culture to be flattened and burned into code, only to be manipulated to fit any framework?

I recently watched the 2018 film adaptation of Ray Bradbury’s “Fahrenheit 451” on HBO, and a line of inquiry sparked in me as I watched the story unfold: is generative AI ushering in (and transmuting into) a new era of book/culture burning?

If you aren’t familiar with “Fahrenheit 451” you need to be, because it’s one of Bradbury’s most prescient and seminal works. Briefly, it is about a future American society that has removed/captured/contained all the cultural treasures of humanity, and follows a “fireman” who becomes disillusioned with his duty to burn books and destroy knowledge, and pivots to trying to save them.

Bradbury’s stated reasons for writing the book changed over time. In a 1956 radio interview, Bradbury said that he wrote the book because of his concerns about the threat of book burning in the United States. In later years, he described the book as a commentary on how mass media reduces interest in reading literature. In a 1994 interview, Bradbury cited political reasons, describing the work as a response to “thought control and freedom of speech control.”

I think the current Gen-AI era we’re living in could be the full realization and ultimate amalgamation of Bradbury’s cautionary tale.

Gen-AI as fire

The all-consuming instinct of big data practitioners has always been to collect as much information as can possibly fit on a chip. You need to feed your training sets everything if you want your AI to do anything.

In the computing world, there is something called “burn-in,” the process by which components of a system are exercised before being placed in service.

I believe that all the data we gleefully fed into AI tools at the outset of this latest techno-boom has been burned into the architecture and outputs of these machines.

The weird part is that, historically and on the whole, book burning has negative connotations. But in this AI-enabled version of Fahrenheit 451, we are all firemen, and we’re happy to shove everything and anything into this forge of the future.

Once we’ve burned all of humanity and its cultural treasures into code, we will be better for it; not because we got rid of them, but because we gave them to a higher intelligence that will in turn give us super-human abilities to take all this knowledge and transform civilization for the better. Make society function better, launch marketable goods and products faster, generate ground-breaking ideas in seconds, and save the planet.

The more we burn, the brighter our future.

Gen-AI as a cultural suppressant

From what we’ve seen, the people running these AI transformers have no compunction about violating copyright protections, and don’t discern any difference between data and code.

Paradoxically, AI is a very unintelligent tool, indiscriminately sucking up and then spitting out results with little attention paid to details, facts, or contextual realities.

When we burn information (books, music, film, art, news, everything) into AI we essentially remove the media from the physical medium, and are left with the ashes.

What we get then is a flattening and codification of culture that dysfunctions at scale.

When you bring your own experience, context, and background to reading “One Hundred Years of Solitude” by Gabriel García Márquez, or listening to Mahler’s 9th symphony, or watching Stanley Kubrick’s “The Shining,” you might take away something completely different than the critical response, synopsis, and summary of these works available on Wikipedia.

Since AI is only capable of replicating/regurgitating data within its training set, it necessarily misses all of the softer, subconscious impacts of our engaged experiences with the materials in question.

As we rely more and more on AI to be a single source of irrefutable truth, we are excising the critical factor of human response to, and reflection on, truth itself. Herein lies the cultural suppression of AI.

“Cram them full of noncombustible data, chock them so damned full of ‘facts’ they feel stuffed, but absolutely ‘brilliant’ with information. Then they’ll feel they’re thinking, they’ll get a sense of motion without moving. And they’ll be happy, because facts of that sort don’t change. Don’t give them any slippery stuff like philosophy or sociology to tie things up with. That way lies melancholy.”

– Fahrenheit 451

Gen-AI as thought control

I recently wrote about the legal findings that bar Wikipedia from being used as a dictionary. Since Wikipedia is a manipulable source of information, curated by contributors who have biases, it cannot be relied on to verify hard facts. But more importantly, Wikipedia, like all the available information on the internet itself, does not represent a full-spectrum sampling of humanity and has massive gaps in its data layers.

AI is not the dispassionate, disconnected database we think it is, but a compendium of all our shortcomings and biases. AI isn’t something other than us, it is us, or more pointedly, it is our data. And those with the easiest, broadest, fastest access to feed the most inputs or “facts” into AI databases (or the datasets used to train/fill them) will be able to set the tone for the outputs.

AI is essentially a statistical modeling tool, built on averages and probabilities, and GenAI is a prediction engine that uses this toolkit to produce desired results. The problem is that once any information has been burned in, it’s abstracted away and severed from its source material. And if there are any data gaps or issues within the black-box AI framework, it’s nearly impossible to know.

It may seem alarmist to claim that AI is thought control, but it doesn’t require a sci-fi leap of faith to substantiate the idea.

Cultural products are a technologically mediated form of thought control. When you read words someone wrote, or listen to music someone recorded, or watch a film someone made, you are able to interact with the creators, their ideas, perspectives, feelings, transmogrifying your whole being, both positively and negatively.

In Fahrenheit 451, the impetus behind book burning traces back to a Ministry that decided, after a disastrous civil war, to erase, replace, and modify culture to make it more conforming and controllable.

For peace, they got rid of all the passion. For safety, they unloaded all the rapid-fire thoughts of culture into the dustbin. For stability and predictability to reign, they had to incinerate the thoughts and reflections of unstable minds and mercurial thinkers.

As we continue to burn all of our cultural outputs into a repository that is just a tool, we should question the end use for such a tool, and how the powers that hold the most sway over the direction of AI might use, and abuse, that toolkit.

“Don’t ask for guarantees. And don’t look to be saved in any one thing, person, machine, or library. Do your own bit of saving, and if you drown, at least die knowing you were heading for shore.” – Fahrenheit 451