Quick-fire musings on how information becomes wisdom, and how agendas prevent agency

Information is noisy, widely available and, on ad-supported media and platforms, typically weighted with meaning, commercial purpose, or intent. It is the easiest to attach to, acquire, get activated on, and apply, and usually wrongly on all counts. Information without knowledge can’t be wisdom.

Information can be gamed, and if so, it fools the audience.

Knowledge is information activated within circumstances. It is challenging to acquire because it requires discarding bad information, which you might have become attached to. Knowledge without information can’t be wisdom.

Knowledge can be gamed, and if so, it fools the players.

Wisdom is experience: knowledge applied to the external world, to society, to systems. Information passed through experience to externalities. Wisdom is only expressed through time, and can’t be rushed.

Wisdom can be gamed, and if so, it fools the game-makers, who feed information of varying quality back into the information pool.

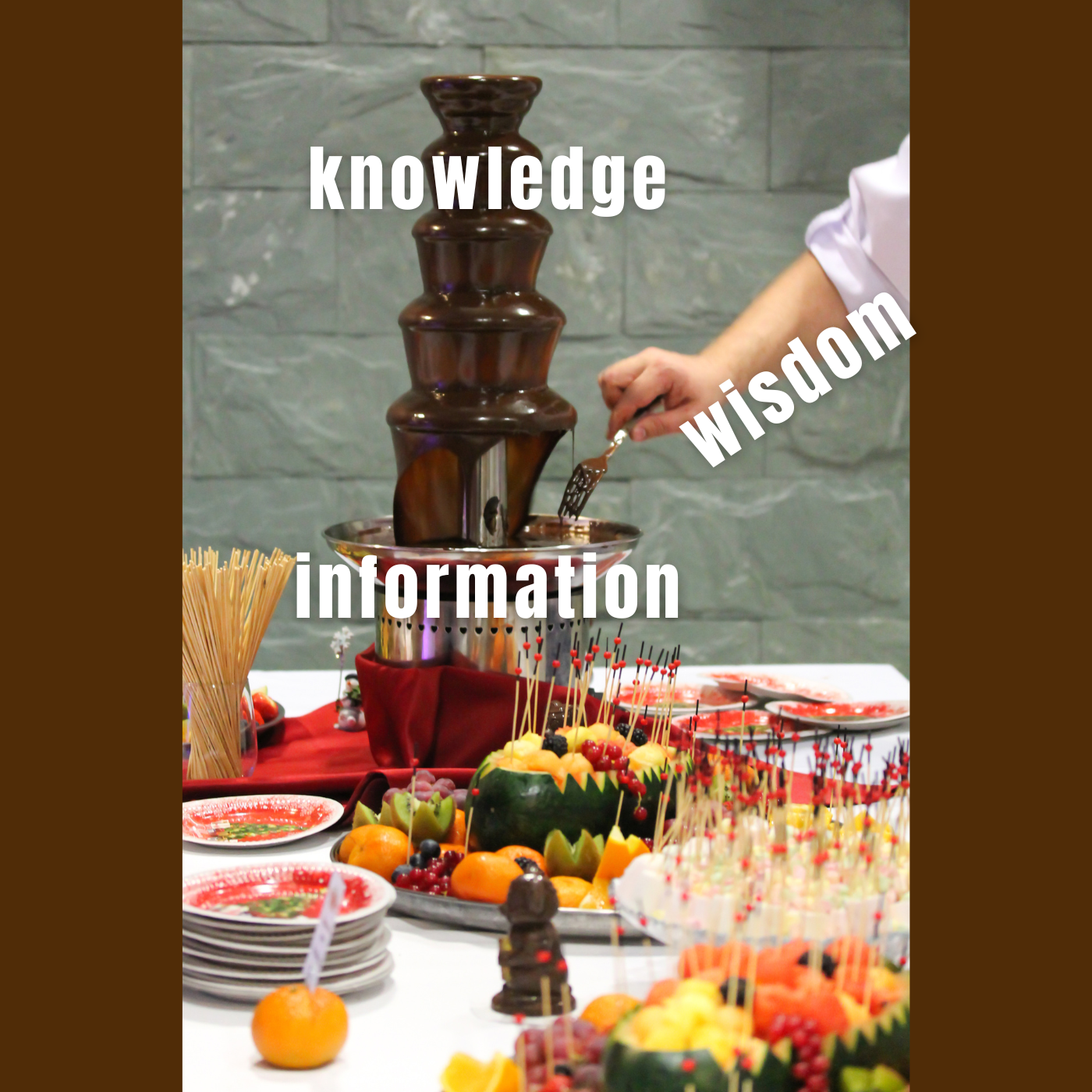

Now…think of a chocolate fountain.

Information is the chocolate—plentiful, tempting, but often low-quality or full of junk.

Knowledge is the pump and the tiers—giving shape and structure to the flow. But if the chocolate’s full of garbage, the system clogs.

Wisdom is knowing what to dip.

What’s this got to do with AI?

In a world where AI increasingly shapes how information flows, we must ask: is it helping us build wisdom, or simply accelerating agendas?

My thesis is that the agentic future of AI is, in truth, a future of AI-empowered agendas—most likely shaped and directed by the dominant model owner.

An agenda is a list of things to be done—a predefined course of action. Agency, by contrast, is the capacity to exert influence, to choose freely, to think and act with autonomy.

When agendas multiply, agency diminishes. If everyone holds an agenda, no one holds agency. As agendas rise, they begin to override the open flow of information, knowledge, and wisdom.

Agenda becomes the lock. Agency, the key.

Agency is not the freedom to create more agendas—it’s the freedom to think, feel, and explore beyond them. It is the capacity to encounter the unknown without a predetermined outcome.

And yet, the technology we are being sold as “agentic” is anything but. It does not amplify agency—it industrializes agenda. It gives us more lists, more tasks, more structure. The illusion of control. The acceleration of output.

But if the unchecked proliferation of agendas continues, it ends in only one place: a singular agenda. A dominant directive. And in that world, agency vanishes.

What if what we’re calling agentic AI is, in fact, agendic AI?