How myths about creativity and the resourceful genius are undermining long-term marketing strategy

“Time and budget are tight for this project. You’re creative, you’ll think of something. What we’re looking for, Jake, is ‘cheap genius.’” – My Life

As a creative person, I’ve relied on my ability to think fast and make connections to advance my career and move from gig to gig. Along the way I’ve been haunted by the spectre of MacGyver, the resource-strapped (read: alluringly cheap) genius of 80s and early-90s American TV, because I think he personifies a few things wrong with marketing and business.

Why?

Think about this…

Of the companies on the first Fortune 500 list in 1955, only 53 still held a place on the list in 2018 (roughly 89% fell off)

A business culture obsessed with risk and cost management, rife with short-termism and ever-shorter CMO tenures

The crumbling foundations of ‘expertise’ and the break-up of industrial knowledge silos

A ‘gig’ economy filled with entrepreneurial DIY-life-coaches-gurus-hackers-AI-agents

The belief that the next big platform or IPO or genius idea will come, like it always does, from a random, scrappy teenager’s garage

And MacGyver…

Mix it all together and it’s plain to see: the belief that MacGyver-like, business-saving genius is cheap, widely available, and flourishes under to-the-wire timelines is a bad brew for marketing and business to be sipping.

I’ll first explain who MacGyver is, unpack what I’m calling The Cheap Genius Theory, and then we’ll explore ways to define and disrupt this damaging trend.

Who is MacGyver?

A bent paper clip can defuse a ballistic missile. A potato and some cigarettes are all you need to thwart a high-tech prison’s security system. Chewing gum alone can defeat an entire militia.

These aren’t just thought exercises; these seemingly implausible scenarios all played out on the late-80s TV action drama MacGyver.

There are MacGyver fan sites dedicated to celebrating the hero’s genius, featuring full breakdowns of every problem he applied his time-strapped, cost-effective MacGyver-ness to across all seven seasons on ABC, from 1985 to 1992.

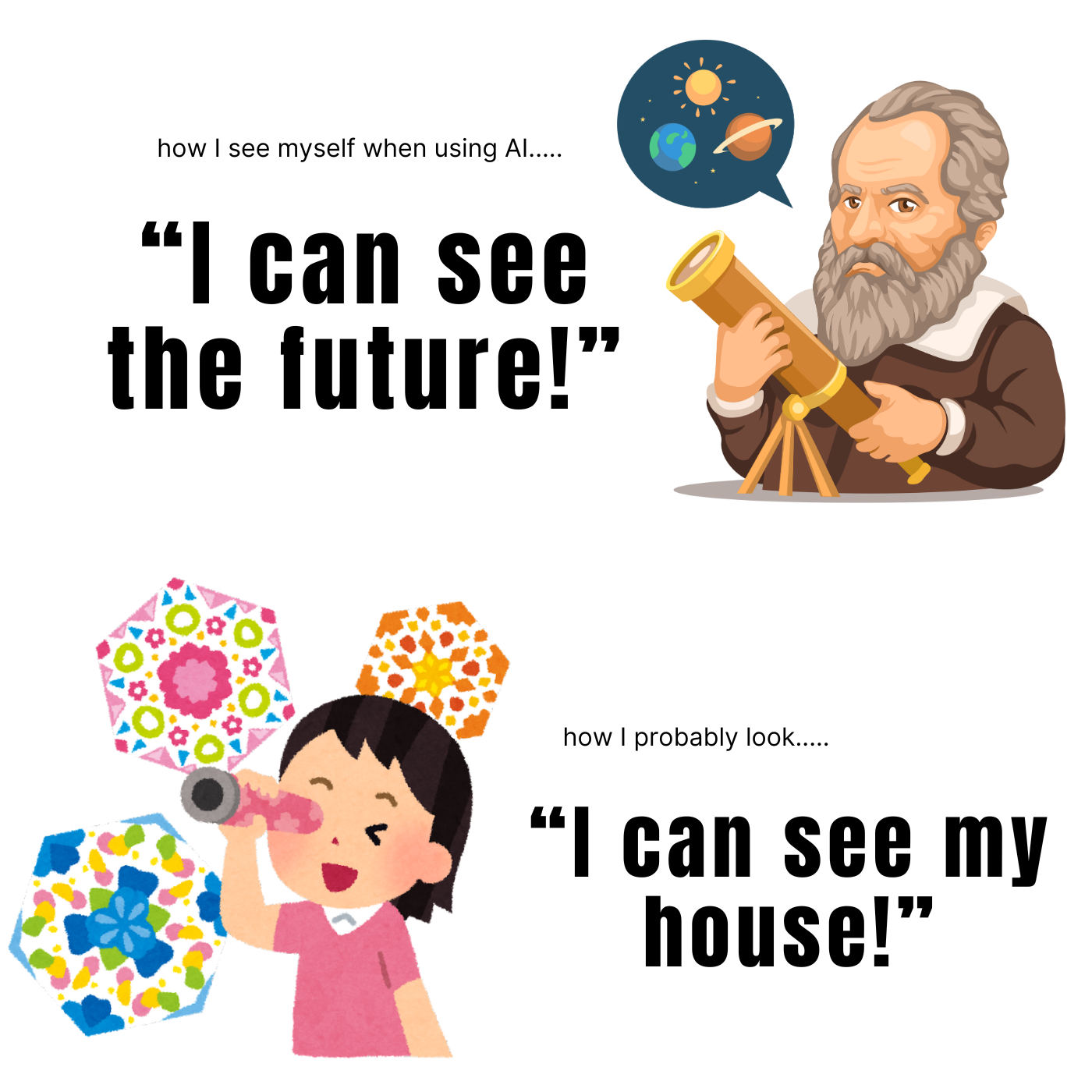

While the show seems to portray creativity very favorably, I believe MacGyver perfectly personifies the perceptual problems around what creativity and “cheap genius” are, where they come from, what resources they need, what they’re worth, and how creative ideas can best be applied in business and life.

The Cheap Genius Theory (CGT) explains why business leaders approach problem solving, creativity, advertising, and marketing the way they do: hoping MacGyver shows up or, worse, thinking they’ll pull a MacGyver themselves and cut the right wire once the countdown on the bomb begins.

There are five concepts behind The Cheap Genius Theory…

HUMANS ARE CHEAP

Although a premium option exists for almost every good or service (the best house/car/President, the best advice, the best hummus!), humans will almost always choose the least-crappy, least-likely-to-fail option. Not the best, just the least worst.

It’s called ‘satisficing’, a term coined by Herbert Simon, and it’s a well-documented part of human behavior, backed by strong empirical evidence and one of the best explanations around for why people make decisions that don’t make sense in the long run.

Sure, we could defuse the bomb the traditional way, but let’s try this paper clip first.

GIVEN RESOURCES, “EVERYONE IS CREATIVE”

With so many brainstorms and Post-it Notes and examples of startups with humble, hooded-sweatshirted beginnings, genius ideas spawned from simple creative thinking seem to be everywhere and cheaply available.

Many are the professional articles outlining how managers just need to unleash their team’s creativity to solve their issues.

If creativity is widely available, that changes how it gets incentivized and whether it gets built into strategic thinking at all.

If we solved the last emergent problem cheaply, on a tight timeline, with a potato, then why budget for experts, time, or resources for this emergency?

If MacGyver can’t disarm the bomb in time, I bet Glen from Accounting can pull it off after this next brainstorm.

CREATIVITY LOVES SPONTANEITY

The myth that creativity is bolt-from-the-blue stuff that always arises spontaneously is especially pernicious outside the creative community.

There’s a belief out there, thanks in no small part to MacGyver, that creativity is best catalyzed by time and resource constraints, and that it’s usually only when your thinking is pushed to the wire that explosively successful results happen.

Nope.

The true skill to develop in creativity is not time-constrained improv, but strengthening the mental muscles that connect threads between disparate channels of thought.

The results of creativity may be most thrilling when they arrive at the drop of a hat, but the connective skill behind them takes a long time to strengthen before rapid ideation is even possible.

So training for creativity isn’t about developing quicker reaction times, but about increasing the mental flexibility to make far-flung connections between wide swaths of human experience, accrued knowledge, and cultural and social consciousness, and then expressing it all through the chosen medium.

Just because someone HAS defused a bomb with a shoe in under 30 seconds doesn’t mean that’s the most effective way to train for defusing bombs.

CREATIVITY ISN’T ACTION OR OUTPUT – IT’S CONNECTION

Creativity is an observation made in the minds of those who connect a creative action to genius; it doesn’t live in the action itself.

Creativity is judged not by the act but by the audience, the norms it upsets, and the expectations it disrupts. Cheap genius is only good when someone is there to see it as genius; otherwise it’s just cheap.

Or worse.

Enthusiasts of The Cheap Genius Theory wrongly think the sole purpose of creativity is to produce actionable ideas, missing the point that the true measure of a creative idea lies in how an audience interprets and accepts it, not just in the idea itself.

REAL CREATIVITY IS SOMETHING WE’VE NEVER SEEN BEFORE

Creativity is not a groundbreaking shattering of molds, but the art of combining recognizable molds in unexpected ways.

Something that had never been seen or experienced before would not strike a familiar chord in our souls, and so it would just seem chaotic or out-there. If you’ve heard Coltrane’s Sun Ship, you know what I’m talking about. If you’re not down, it’s a tough hang.

The Cheap Genius Theory celebrates the skillful use of a paper clip to defuse a missile, overshadowing the creative skill that actually deserves praise: a deep understanding of pre-existing concepts (in this case, metallurgy and electricity) and the ability to combine and apply them quickly in novel situations.

Ingenuity and spontaneous invention are only possible on the shoulders, brains, backs, thoughts, legends, laws, and expectations of the rules that have come before.

You can defuse the bomb with gum and its wrapper because you know about microchips, friction, the chemical properties of saliva, and the electrical conductivity of metallic substances. Without Galvani, Lavoisier, Curie, Jack Kilby, Wrigley, and the ancient Aztecs who found chicle, none of that cheap genius would be accessible.

Why this is bad, and what to do about it

Because they believe creative thought is widely available, potentially cheap, and the product of chaotic spontaneity, businesses don’t plan, budget, or schedule for it, let alone reserve a seat for creativity at the strategic table.

That’s bad.

Beyond cheapening creativity and devaluing its place in business development plans, The Cheap Genius Theory does its most destructive work on marketing strategy.

Since disruption threatens every established business model, stakeholders across the world run their businesses knowing that companies don’t last as long as they used to.

But rather than strategically changing their business models, or solving business problems with creativity at the front end, CEOs are relying on MacGyvers to save the business as is, cutting costs where they can, and focusing their marketing campaigns on higher conversions over shorter observation windows.

Whether it’s Byron Sharp, Les Binet, Mark Ritson, Dr. Grace Kite, Rory Sutherland, or any of the other great minds in marketing today, the keenest people in the room agree: short-termism is a rampant disease in business today, with drastic side effects on strategic, creative, long-term thinking.

I think there needs to be a perceptual shift in the way we view creativity, and it starts by admitting the truth of The Cheap Genius Theory, and realizing our business development strategies are not strategies at all, but rather a string of implausible MacGyver-like fixes.

We have to admit that CGT throws off our sense of how creative thought is best curated, generated, and applied to researching business problems. And we have to change the way we apply creative thinking to researching and diagnosing the problems our companies face over time in a competitive marketplace, and to designing the solutions that fix them.

Oh, that’s marketing.

And then, once we understand creativity, I think we need to dial it up!

After brand size, creativity is one of the most important factors in effective marketing and advertising. But because of The Cheap Genius Theory…

creativity is paradoxically the first thing everyone relies on to solve a marketing issue, but the last thing anyone plans on paying for.

Rather than relying on more MacGyvers to show up, I’d like to see creativity given its proper respect, timeframe, and proving grounds to demonstrate its ability to guide business development strategy. Businesses should create a place for deep thought and research: a mental gym that strengthens the connective and creative muscles of their teams.

Without exercising both the slow- and fast-twitch muscles of creativity (research and execution), continual cheap-genius fixes, no matter how ingenious, will yield ever-diminishing returns.

No one is arguing that resourceful creativity isn’t important to business development. But rather than using creativity to strategically adapt our business models and marketing plans, we’re praising, seeking, and deploying versions of ‘genius’ that imprison us and keep us mucking about with the same short-term fixes and cost-effective disarming methods, for a bomb that’s already killed 89% of the last MacGyver-dependent businesses.