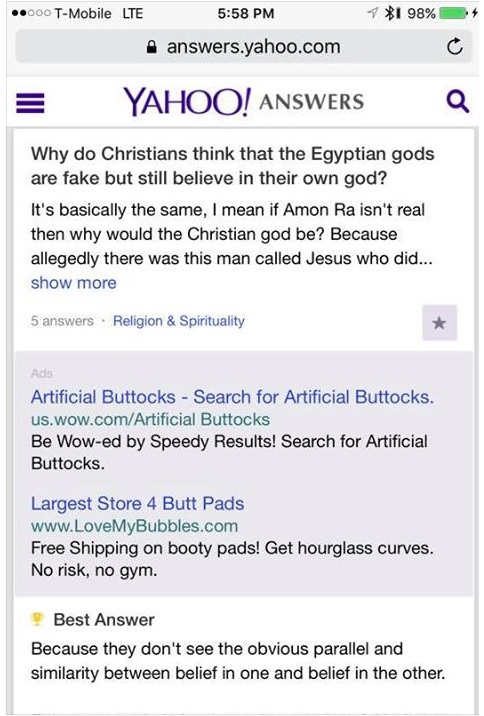

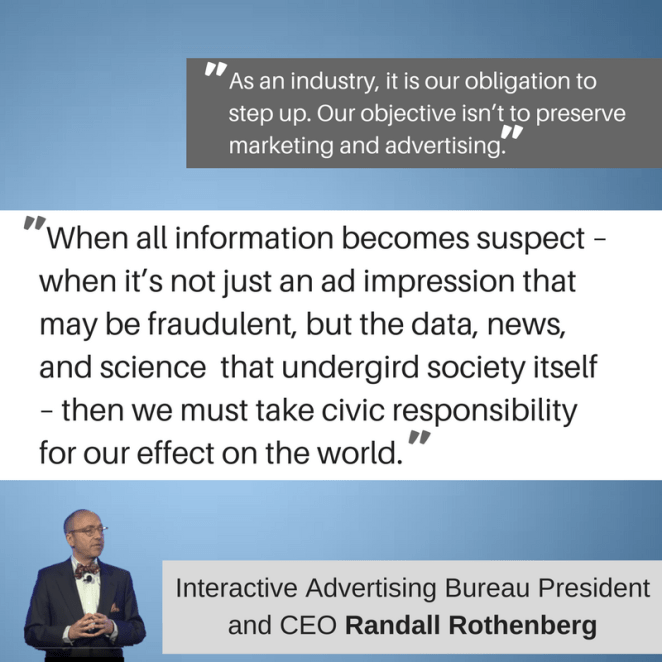

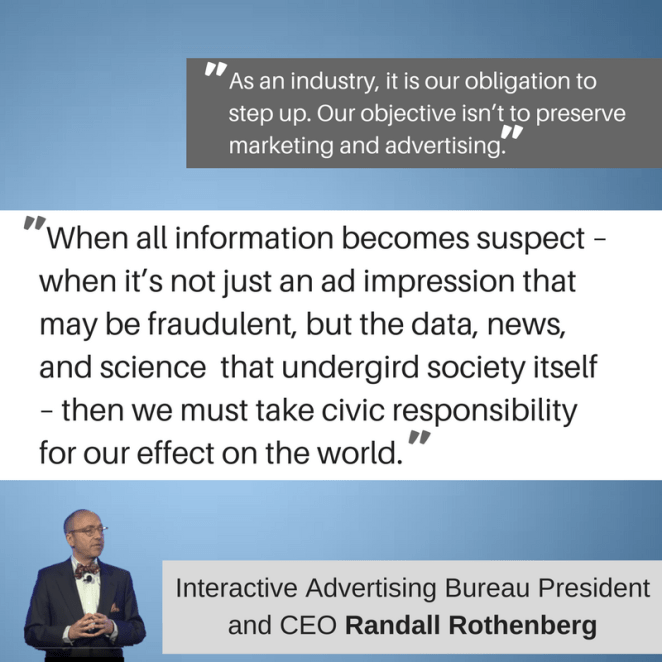

When Interactive Advertising Bureau President & CEO Randall Rothenberg called for an industry-wide commitment to fight “Fake News” at an Annual Leadership Meeting in Hollywood, Florida in January of this year, the response from the audience was mixed.

Rothenberg went on, in his truly inspiring speech, to elaborate on the important role advertisers and marketers now find themselves playing. He outlined the myriad ways algorithms, big data, and the eco-systems that prop up digital advertising are ruining the exchange relationships of information online. And he suggested to his audience, CMOs and ad agencies representing some of the top companies in the world, that they need to help bring the change or suffer the consequences of eroding trust in the digital landscape.

Looking back now, Rothenberg’s speech takes on a Nostradamic hue of prophecy.

Marketers and advertisers have unwittingly taken public discourse and the connected community of the Internet into a navel-gazing, filter-bubble-filled, truth-destroying, civilization-shaking death spiral. And to pull out of it, we have to realize our place in the cockpit, and understand how we got here.

Entertain me – what’s the worst that could happen?

A group of our marketing and advertising colleagues working with the Data and Marketing Association literally stood up and cheered as they were on hand to witness the Senate, then the House, then the President peel back Obama-era protections/regulations, allowing ISPs to access and distribute consumer data, browsing history, in-app messages, and emails to third-party companies for profit, all without consumer consent.

The interested parties have struck a deal – people love relevant ads!

When the vote passed the Senate, on March 23, 2017, we heard this from Emmett O’Keefe, SVP of Advocacy at the Data and Marketing Association:

“Today’s vote in the Senate and expected approval in the House signal that our nation’s top policymakers recognize that our current system of responsible data use works.”

The trust was apparently so overflowing, and the data of the marketplace was handled so responsibly, that the same day, these US companies followed the lead of brands in the UK and pulled entirely out of Google & YouTube digital display advertising agreements, resulting in a loss of hundreds of millions of dollars for the digital ad giant:

- AT&T

- Beam Suntory Inc.

- Dish Network

- Enterprise

- FX Networks

- General Motors

- GSK

- Johnson & Johnson

- Nestle

- PepsiCo

- Starbucks

- Verizon

- Walmart

These companies, “unknowingly,” were buying programmatic ad-placements that were being paired up with hateful content on Google & YouTube – a Snickers pop-up under an ISIS beheading, or Nazi propaganda brought to you by Mercedes. Truth be told, digital ads have always been placed wherever they can be, and who cares where they go cos they are cheap as dirt! You got the impressions/clicks/views – digital advertising, accomplished. So we spend a few bucks on digital display ads – What’s the worst that could happen?

One of the Managing Directors at Edelman PR, Gavin Coombes, has a quote that sets us up perfectly for the next slippery slope we need to slide down – – –

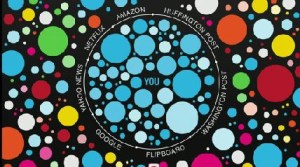

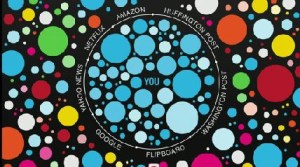

“As Internet-based communication has become used more often and by more people, we have found ourselves in the paradoxical circumstance of more information arguably leading to less understanding. The “echo chamber” – identified in the latest Edelman Trust Barometer as a major factor in feeding fear and distrust of institutions – is a phenomenon that reached a tipping point in 2016 and with potentially epochal implications. And, seemingly, without warning.”

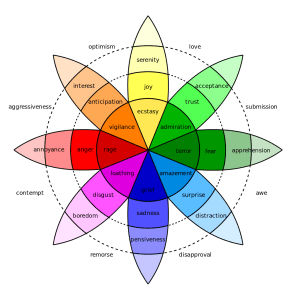

Coombes goes on to explain that social media operates more like tabloid media, vs traditional mass media – using content that entertains or connects emotionally, rather than content that empirically informs. Users prefer, and come to rely on, a steady diet of things that are happy, sad, funny or violent. This diet is typically filled with people like them and based on info they provide freely. So your social media stream is hand-selected by algorithms owned and operated by the social media ecosystems – always designed to keep the most engaging content in front of you, forever regenerating, endlessly attached to advertising revenue, open to marketers of all stripes.

In 2014, it was revealed that Facebook was able to manipulate users’ emotions depending on what posts a tailored algorithm allowed onto their “Wall.” Positive posts on a user’s wall were shown to elicit more positive posts in return; conversely, exposure to negativity promoted the sharing of negative material. Facebook performed its experiment without any user consent, and since the Terms and Conditions covered the unfettered access to user data, it was all above-board. Google and Yahoo both follow this same protocol – provide a seemingly “free” product, observe usage, get as much data as possible, get advertising content, and tweak the delivery to get the “relevant” info in front of the right people.

The reaction to Facebook’s experiment from the marketing and advertising community was shock and hidden joy. Here they had proof of a proper approach to gaming Facebook – emotions spread, and targeted, relevant emotions triggered at the right time can cause action. Proof that information, if properly placed at the right time, could effect change. Facebook’s algorithms keep its growing 1.7 billion user base glued to the platform – showing them whatever keeps them engaged, and not too pissed off – and all the while, user data is shared with anyone willing to pay for it.

So what? Marketers and advertisers get to sell soap to people they know like soap. We might use emotional triggers to get people to take action, but it’s with babies and puppies. It’s all good – What could possibly go wrong?

For those unfamiliar with the rising prominence of the newest and least experienced player to step on the field of international diplomacy on behalf of the United States, Jared Kushner – or how the former real-estate mogul turned dad-in-law Trump’s campaign around by scaling existing marketing technology and “expertly” manipulating the filter-bubbles and emotional triggers of social and search….

For those unfamiliar with the technology he purchased through Cambridge Analytica, and how that company can provide its clients, through their OCEAN targeting, info on users’ political affiliation, race, gender, neuroticism, conscientiousness, openness and other psychological/emotional triggers……

For those unfamiliar with Cambridge Analytica’s parent company, SCL Group, which, since 1993, has made its name providing “psychological operations” for political campaigns around the world, marketing its services to militaries and state security agencies, providing impeccable, highly-targeted, and politically-weaponized disinformation campaigns to such countries as Pakistan and Great Britain….

For those unaware of the role of mega-hedge-fund-lord Robert Mercer in funding Breitbart, Brexit, his huge investment in Cambridge Analytica both in the UK and US, his not-so-shadow-funding of pro-Trump media blitzes through the new non-profit media company “Making America Great Again,” and his insane amount of influence in the de-globalizing, anti-intellectual, climate-denying, xenophobic, media-exploding, war-mongering shit show we are currently living through…….

You should look into this stuff. What’s the worst that could happen?

This quote from Professor Jonathan Rust, director at Cambridge University’s Psychometric Centre, can help fit the final piece of our puzzle –

“The danger of not having regulation around the sort of data you can get from Facebook and elsewhere is clear. With this, a computer can actually do psychology, it can predict and potentially control human behaviour. It’s what the scientologists try to do but much more powerful. It’s how you brainwash someone. It’s incredibly dangerous.

“It’s no exaggeration to say that minds can be changed. Behaviour can be predicted and controlled. I find it incredibly scary. I really do. Because nobody has really followed through on the possible consequences of all this. People don’t know it’s happening to them. Their attitudes are being changed behind their backs.”

So what should/can marketers and advertisers do about this?

Looking at the above information, the marketer and the advertiser can see several opportunities. Opportunities for more user-generated data, enhanced abilities to track and deliver personalized ads on behalf of brands, nuanced insight into consumer behavior, ways to sneak our messages through emotional pathways, unlimited access to centralized audiences, and access to an ever-expanding marketplace.

Or are the opportunities aligned with a larger civic duty, not just towards our profit margins, but to our society, our fellow humans?

- Could advertising, done the right way, save the world?

- Can we open the conversation with our digital marketing companies about ways we can fight ad-fraud together?

- Can we hold our marketing and advertising associations accountable for their Code of Ethics, and be active, ethical allies for conscientious consumers?

- Can we work to protect the marketplaces and technology that enable ethically and mutually agreed-upon exchange relationships from “bad actors” or manipulative entities?

- Can we not sell everyone’s private data up the damned river, just so we can send them “better ads”?

Whatever your stance on ethics and morality and marketplace logic and free will, we have to realize that while we’ve been engineering the latest and greatest ways to sell stuff in our marketplaces, we’ve also greased the tracks for a whole host of nefarious players to enter these spaces and use our marketing technology of demand-engineering, targeted behavior modification, and so on. These sinister forces are inflicting serious harm on the information eco-systems so necessary to our livelihoods and the continuation of modern civilization.

I’ll leave you with a final quote from Rothenberg, and it’s a perfect ending for this rant of an article, because it’s how he ended his speech, mic drop style.

“. . . now I am asking you to reach higher, and deeper into your own better nature. The values we hold dear – diversity, freedom of speech and religion, freedom of enterprise – are under assault, and digital marketing, advertising, media, and technology companies bear some measure of responsibility. The route from self-interested “standards” to fraudulent ads to blind-eyed negligence to the financing of criminal activities to support for hatred is clear, and it is direct.

What we say here – and what we do here – makes a difference. Please leave this conference with this understanding: You have the power to move fast and fix things. You have the ability to repair our credibility. You have the power to rebuild the trust. Thank you.”

So are you a marketer who is willing to stand up and make the world a better, more trusting place? If you rise to the occasion and exhibit the best of what humanity has to offer, at least in a marketer, what’s the worst that could happen?
